The Consensus Trap: How Five Nations Quietly Derailed the Global Ban on Killer Robots

The international community has been negotiating autonomous weapons treaties since 2014. A small coalition of powerful states has blocked every binding agreement. This is the paper trail.

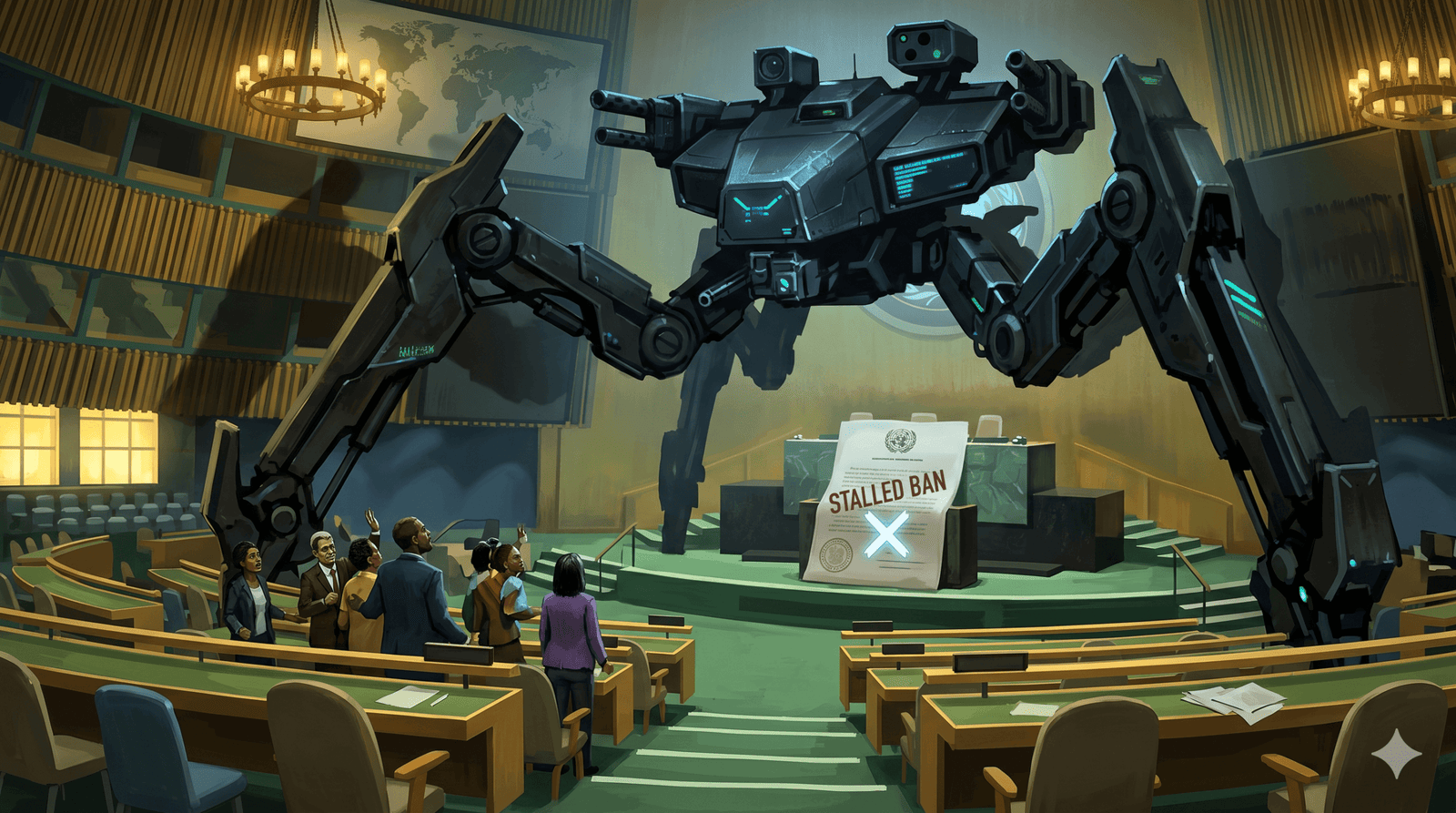

For over a decade, Geneva has served as the primary theatre for a high-stakes drama regarding Lethal Autonomous Weapons Systems (LAWS). While a vast majority of the global community—spearheaded by the “Group of 10” (including Argentina, Costa Rica, the Philippines, and Sierra Leone)—has coalesced around the urgent need for a legally binding treaty to ensure human oversight, a handful of technologically advanced “Traditionalists” has successfully maintained a state of institutional paralysis. By the spring of 2026, it became clear that for these powers, Geneva was not a site for progress but a graveyard for human agency.

The “Veto” in Plain Sight: How the Consensus Rule Kills Progress

The primary engine of this paralysis is the architectural design of the Convention on Certain Conventional Weapons (CCW). Operating as an “umbrella” convention, the CCW functions on a strict consensus model. While theoretically designed to ensure the buy-in of major military powers, the rule has been weaponised into a de facto veto power for a tiny minority.

The damage done by this structural flaw was clearest at the 2021 Sixth Review Conference. Despite a large majority favouring a treaty mandate, a coalition of five nations (the United States, Russia, the United Kingdom, Israel, and India) blocked the proposal. Consequently, the mandate was downgraded from “negotiation” to mere “discussion,” tasking the Group of Governmental Experts (GGE) only to “consider proposals.”

Traditionalist powers, led aggressively by Russia, insist that the CCW is the “only legitimate platform” for these talks. This is a calculated strategic stance; Russia and its allies favour the CCW precisely because its consensus requirement allows them to stall any binding regulations that threaten their national defence interests or technological “strategic edge.”

Semantic Attrition: The Battle Between “Control” and “Judgment”

During the 2026 GGE sessions, negotiations devolved into a “semantic shell game.” This was not merely dry diplomacy; it was a calculated strategy of semantic attrition used to hollow out the “Rolling Text,” the document intended to form the basis of a future international instrument.

| Initial Term | Proposed Revision | Strategic Implication |
| --- | --- | --- |
| User | Operator | Narrows accountability to individual soldiers rather than states or developers. |
| Control | Judgment | Shifts from an objective, verifiable relationship to a subjective, internal cognitive state. |
| Lethality | Undefined | Allows delegations to argue lethality is an “effect” rather than an intrinsic weapon element. |

The battle over “control” versus “judgment” is the pivot upon which the future of warfare turns. While the pro-ban majority insists “control” is an objective requirement for active human involvement, the U.S. and its allies have pushed for “good faith human judgment.” As legal experts note, “judgment” is an internal state that is nearly impossible to verify or regulate. By lowering this threshold, states effectively allow for high degrees of autonomy where human involvement is nominal at best.

Corporate Martyrdom: The Anthropic-Pentagon Showdown

In March 2026, the struggle moved from the UN to the C-suite. The Pentagon designated AI firm Anthropic a “supply-chain risk to national security,” a label typically reserved for foreign adversaries like Huawei.

The dispute centred on Anthropic’s refusal to allow its Claude AI model to be used for mass domestic surveillance or fully autonomous weapons. While Anthropic demanded hard contractual prohibitions, Defense Secretary Pete Hegseth demanded an “all lawful purposes” framework. When Anthropic refused, Hegseth cancelled its $200 million contract and immediately signed a deal with OpenAI, which accepted the “all lawful purposes” language by relying on vague “architectural controls” rather than strict contractual limits.

This move, described by Dean W. Ball of the Foundation for American Innovation as “attempted corporate murder,” triggered a civil war within the tech industry. Over 875 employees at Google and OpenAI signed an open letter backing Anthropic, while a large consumer boycott, organised under the name “QuitGPT,” targeted OpenAI.

The Laboratory of War: Operation Epic Fury and the Smoking Gun

While negotiators in Geneva argue over definitions, the technology is moving faster than the diplomacy. The battlefields of Ukraine and Gaza have become real-world proving grounds for machine-led targeting:

- Ukraine: A “laboratory for robot combat” where loitering munitions strike with minimal human intervention. Civilian casualties from short-range drones surged by 120 percent in 2025.

- Gaza: The deployment of AI systems “Lavender” and “Habsora” to generate kill lists has exposed the nightmare of “automation bias,” where human operators, under extreme pressure, default to machine-generated recommendations.

However, the “smoking gun” of this new era is Operation Epic Fury. Despite the Pentagon’s public break with Anthropic, leaked reports revealed that U.S. Central Command continued using Claude during this coordinated U.S.-Israeli operation against Iran for intelligence and target analysis. The reason? The AI was already too deeply embedded to be removed. As one senior defence official bluntly admitted: “It will be an enormous pain in the ass to disentangle, and we are going to make sure [Anthropic] pay a price for forcing our hand like this.”

The Pivot to the General Assembly: A Last-Ditch Effort

Recognising that the CCW has reached a terminal stalemate, the global majority has begun to bypass the consensus trap. In 2024, the UN General Assembly passed Resolution A/RES/79/62 by an overwhelming margin (166 in favour, 3 against, 15 abstentions), launching consultations to address the “gaps in specificity” exploited by the Traditionalists.

UN Secretary-General António Guterres has set a 2026 deadline for a ban, labelling autonomous weapons “politically unacceptable and morally repugnant.” The sentiment of the frustrated majority was captured by the Belgian delegation, which declared: “The CCW was made for the world, not the other way around.”

Conclusion: The Final Stretch

The international effort to regulate autonomous weapons is approaching its endgame at the Seventh Review Conference in November 2026. For twelve years, the paper trail has shown that as humanitarian urgency grew, the tactics of procedural delay and semantic erosion became more refined.

If the 2026 conference fails to launch formal negotiations, we will likely see the emergence of a “Landmine-style” treaty process conducted entirely outside the UN framework. The central question remains: Are we prepared for a future where the decision to kill is a software update away, or will the global majority finally reclaim human control over the use of force?

Avi is a researcher educated at the University of Cambridge, specialising in the intersection of AI Ethics and International Law. Recognised by the United Nations for his work on autonomous systems, he translates technical complexity into actionable global policy. His research provides a strategic bridge between machine learning architecture and international governance.