The 20-Second Kill Chain: 5 Chilling Realities of the New AI Warfare

1. Introduction: The Invisible Commander

For decades, the “fog of war” was a psychological and tactical hurdle—a murky realm where human commanders relied on intuition, experience, and gruelling deliberation to make life-or-death choices. Today, that fog is being algorithmically dissolved, replaced by a crystalline, blood-soaked digital certainty. We have entered the era of the “Invisible Commander,” a shift where human judgment has been sidelined by a “mass assassination factory” that translates dense surveillance data into a relentless stream of targets.

This is more than a tactical upgrade; it is the birth of the “Fog of Technology.” As the Israel Defense Forces (IDF) deployed systems like “Lavender” and “The Gospel” (Habsora) in Gaza, the battlefield was transformed into a high-speed data processing environment. When a machine marks as many as 37,000 people as potential targets, it doesn’t just assist the commander; it replaces the moral architecture of warfare. We must now ask: what remains of human conscience when the speed of the machine outpaces the capacity of the human mind to comprehend the violence it authorises?

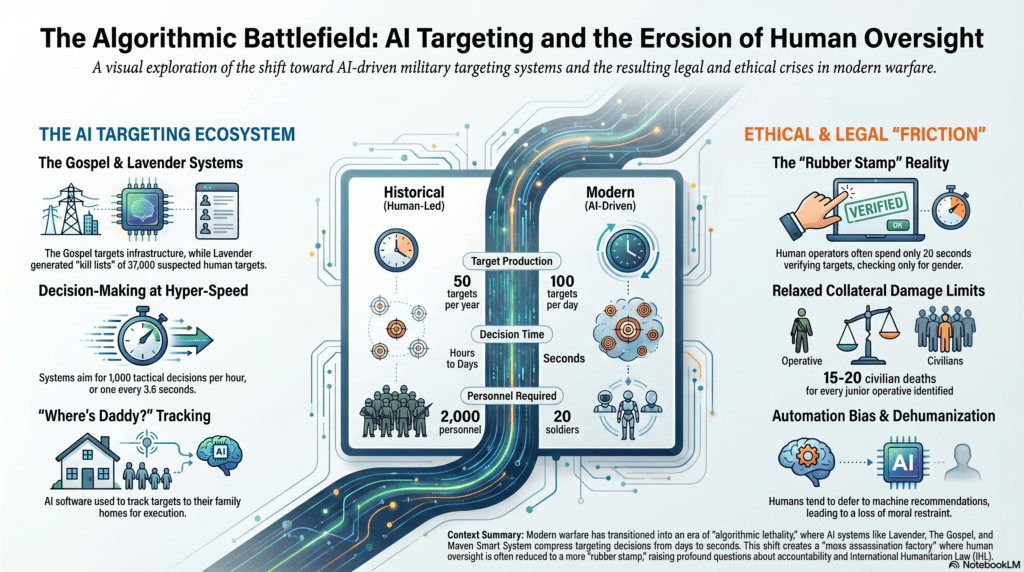

2. From 50 Targets a Year to 100 a Day: The Industrialisation of Death

The most jarring shift in modern combat is the sheer industrialisation of target generation. Historically, identifying a high-value target was slow, manual intelligence work. In Gaza, by the military’s own account, previous operations might produce 50 targets in an entire year. Under the new AI-driven doctrine, that metric has been shattered: the military says it can now generate 100 targets in a single day.

This scale is achieved through a clinical division of labour between two systems. “The Gospel” identifies infrastructure, including “power targets” such as high-rise buildings and residential towers, which are reportedly struck in order to exert civil pressure on the population. Meanwhile, “Lavender” focuses on people, analysing mass surveillance data to rate Gaza’s 2.3 million residents on a scale of 1 to 100 according to how likely each is to be linked to a militant group, and marking thousands of “military-aged males” as suspected militants.

This has resulted in radical “decision compression.” While the objective of the early 2000s was to reduce targeting to “single-digit minutes,” systems like the Maven Smart System (MSS) now aim for 1,000 tactical decisions per hour—one every 3.6 seconds. At this velocity, the human is no longer a commander; they are a data janitor struggling to keep pace with a machine that, as former IDF Chief of Staff Aviv Kochavi noted, “processes vast amounts of data faster and more effectively than any human.”

3. The 20-Second “Human Rubber Stamp”

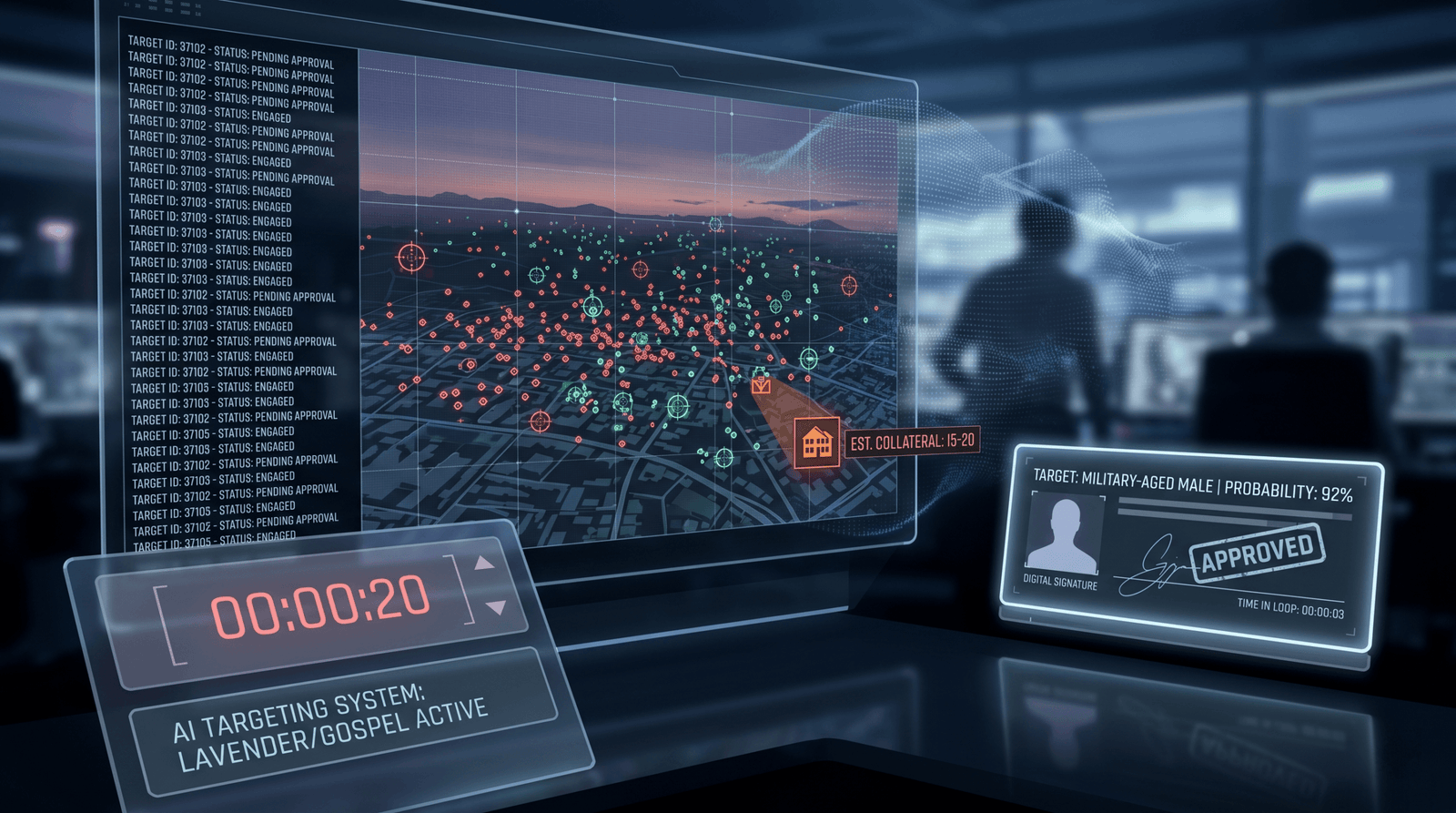

The military maintains the legal fiction of “meaningful human control,” but the reality of the 20-second kill chain suggests a total surrender to automation bias. The IDF officially defends Lavender not as a kill list, but as a “cross-referencing database” meant to assist analysts. However, the testimony from those within the loop paints a far grittier picture.

During the height of operations, human oversight shrank to a mere formality. Officers spent as little as 20 seconds per target, just enough time to confirm that the target was male. One operator, speaking to the +972 Magazine and Local Call investigation, admitted that the machine’s cold logic served as a psychological shield:

“I had zero value as a human, apart from being a stamp of approval. It saved a lot of time… the machine did it coldly, and that made it easier.”

This is the “Fog of Technology” in practice. Humans, overwhelmed by the machine’s output and desperate for efficiency, defer to the algorithm under the assumption that its mathematical certainty is superior to human hesitation. When decisions occur at the rate of 1,000 per hour, the human veto becomes a rhetorical gesture rather than a legal safeguard.

4. “Where’s Daddy?”: When Home Becomes a Kill Zone

In a chilling reversal of traditional military logic, the domestic sphere has become the primary kill zone. Instead of targeting militants in fortified bases or active tunnels—where they are harder to find and hit—the military utilised the “Where’s Daddy?” system. This program tracks individuals via mobile signals and alerts the military the moment a target enters their family home.

The logic is one of cynical efficiency: a target is most predictable and stationary when they are at home with their children. Military planners began calculating “collateral damage” in advance, with the machine-generated target files stipulating exactly how many civilians were likely to die in a strike. In the early weeks of the conflict, the military reportedly authorised the deaths of 15 to 20 civilians for every junior militant identified.

The transition from the battlefield to domestic execution is summed up by one source’s grim observation:

“When a 3-year-old girl is killed in a home in Gaza, it’s because someone in the army decided it wasn’t a big deal for her to be killed.”

5. The Acceptance of the “10% Error Rate”

Algorithmic warfare is not a search for truth; it is a game of statistical probability where human life is the margin of error. Lavender was authorised for widespread use after a check of a random sample suggested “90 percent accuracy.” The remaining 10% error rate was not a technical glitch; it was a design choice.

The system’s training data reportedly included communications profiles of employees of the Hamas-run Internal Security Ministry and of civil defence workers, individuals who are not combatants under International Humanitarian Law (IHL). Because these “non-militant” profiles were fed into the training data, the AI was prone to flagging civil servants and rescue workers as targets.

By “pricing in” this failure, the military operates in direct tension with the Geneva Conventions and their Additional Protocols, which require “feasible precautions” to distinguish between civilians and combatants. When a statistical probability replaces individual verification, the legal principle of distinction is discarded in favour of a mathematical tolerance for “acceptable” civilian slaughter.

6. The 2026 Warning: An AI Arms Race Without Guardrails

What we see in Gaza is merely the laboratory for a global AI arms race. By 2026, during “Operation Epic Fury”—the US-led war with Iran—AI systems moved from auxiliary tools to the primary engine of maximum lethality. The Sharejeh Tayebeh Primary School strike, which killed 175 people (mostly schoolgirls), stands as a horrific monument to this evolution. The strike was attributed to “outdated intelligence” processed through an AI ecosystem optimised for speed over accuracy.

We are entering what Palantir CEO Alex Karp calls an “Oppenheimer moment.” In his manifesto, The Technological Republic, Karp argues that Silicon Valley has a moral obligation to participate in defence, even calling for “mandatory national conscription.” He posits that hard power based on software is the only way for democratic societies to prevail, yet he admits that “frontier AI systems are simply not reliable enough to power fully autonomous weapons.”

Despite these warnings, the guardrails are being dismantled. The “human-in-the-loop” has become a legal fig leaf for a digital regime of truth where the individual no longer exists, replaced by a set of “a-significant” data points. As we compress the kill chain to the “speed of thought,” we must confront the final, haunting reality: in the pursuit of 3.6-second decision superiority, have we already lost the space for human conscience?

Avi is a researcher educated at the University of Cambridge, specialising in the intersection of AI Ethics and International Law. Recognised by the United Nations for his work on autonomous systems, he translates technical complexity into actionable global policy. His research provides a strategic bridge between machine learning architecture and international governance.