The Algorithmic Battlefield: 5 Takeaways from the Silent Revolution in Warfare

In April 2024, hundreds of Google and Amazon employees stood outside their corporate offices to protest Project Nimbus, a $1.2 billion cloud computing contract with the Israeli government. To the public, these companies are “ethical innovators,” branding themselves as stewards of a digital future defined by “AI for Good.” However, as the dust settles over modern conflict zones, it is becoming clear that the “rules-based order” is not being protected by these technologies—it is being dissolved by them.

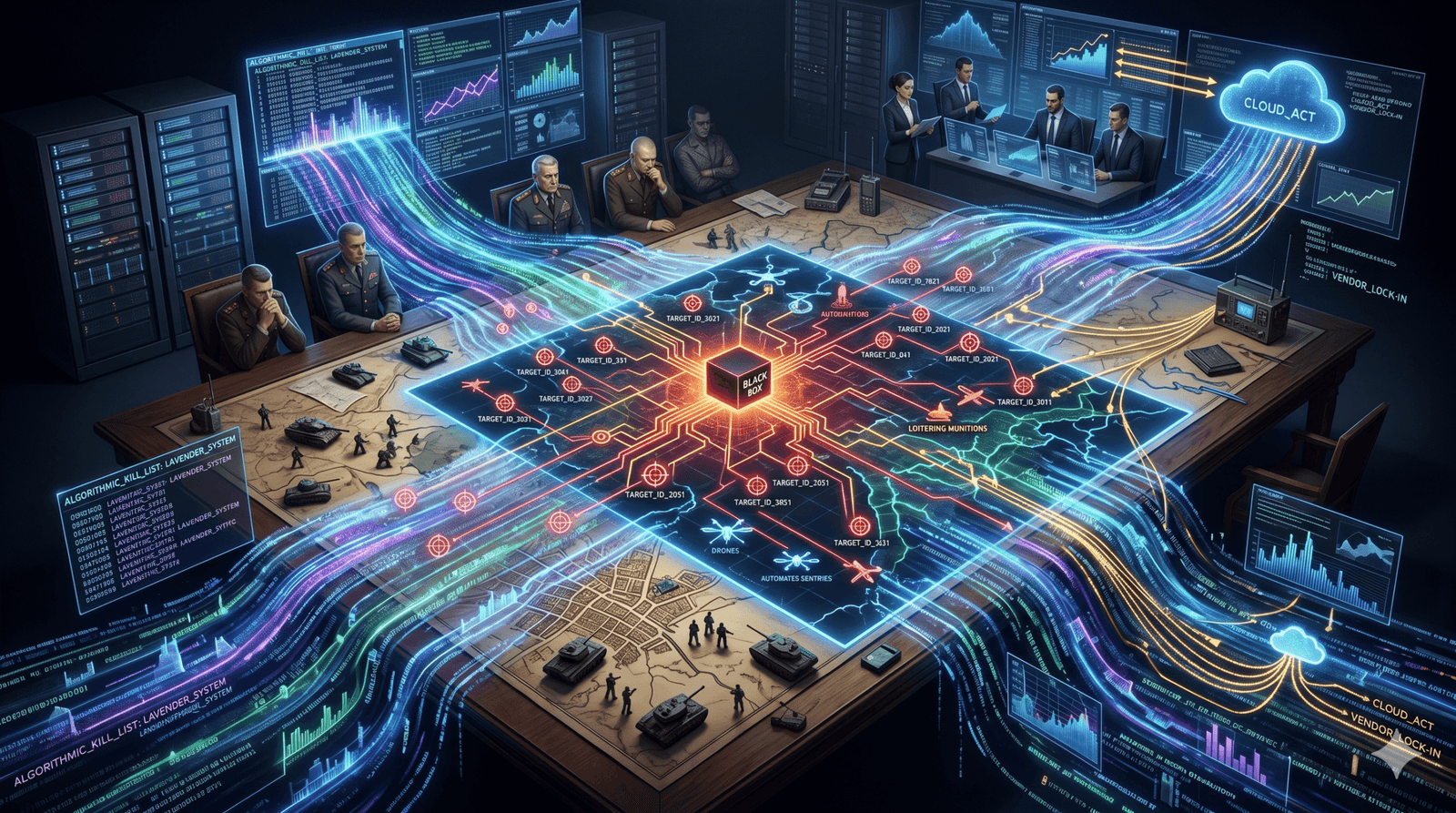

The reality of the algorithmic battlefield is that the veneer of corporate ethics has been stripped away, revealing a new military-industrial-tech complex where Silicon Valley’s proprietary code is the primary engine of violence. This is a silent revolution, one where the speed of software has outpaced the slow deliberations of international law. As we move from human-led decision-making to a “Digital Targeting Web,” the distinction between a software vendor and a combatant has ceased to exist.

Behind the rubble of Gaza and the trenches of Ukraine lie vast stores of data, including more than 13 petabytes that the Israeli military funnelled into Microsoft servers: a staggering repository of human life transformed into targeting metadata. This is a deep dive into the “black box” of modern warfare and the five critical takeaways from the revolution we are currently living through.

1. Machines Now Own the “Critical Functions” of Violence

The fundamental shift in 21st-century warfare is the transition of “critical functions”—the selection and engagement of targets—from human judgment to autonomous weapon systems (AWS). The era of the “man in the loop” is ending; we are entering the era of the machine as the decision-maker.

The International Committee of the Red Cross (ICRC) defines these systems with clinical precision:

“Any weapon system with autonomy in its critical functions—that is, a weapon system that can select (search for, detect, identify, track or select) and attack (use force against, neutralise, damage or destroy) targets without human intervention.”

This isn’t science fiction. These capabilities are already integrated into current Intelligence, Surveillance and Reconnaissance (ISR) systems. From missile and rocket defence shields to loitering munitions and automated “sentry” weapons, autonomy is the new baseline. Once activated, the system’s sensors and software determine the target. The machine identifies a signature (a heat map, a radio frequency, or a face) and “prosecutes” it.

The technical challenge is that as these systems become more complex, incorporating “Ontology” (the mapping of relationships between disparate data points), the ability of a human to exercise “meaningful control” vanishes. When a machine acts at machine speed to neutralise a threat, the human operator becomes a spectator to a “kill chain” they no longer truly direct.

2. The Rise of the “Kill List” and the Efficiency of Genocide

If Ukraine provided the testing ground for AI speed, Gaza has become the laboratory for its lethal scale. The Israeli military has shifted to a total “AI war,” running a specialised workflow of destruction through three primary systems: The Gospel, Lavender, and Where’s Daddy?

The Gospel acts as a factory for target generation, identifying buildings and infrastructure at a rate no human analyst could match. Lavender then processes mass surveillance data (intercepted calls, social media, and biometric records) to rank individuals on a “kill list.” Finally, Where’s Daddy? tracks these individuals in real time, often alerting the military when they have returned to their family homes.

The technical efficiency is horrifying. In the early months of the conflict, the Israeli military’s use of Microsoft Azure’s machine-learning tools reportedly rose 64-fold compared with pre-war levels. More than 13 petabytes of data, equivalent to millions of hours of surveillance, were funnelled into Microsoft servers to fuel these algorithms.

The result is what military officials chillingly refer to as “garbage targets.” Because the system prioritises the speed of the “kill chain,” the threshold for a “positive ID” has collapsed. For the Lavender system, reports indicate the only human check applied to the automated list was the verification of the target’s gender. If the algorithm flagged a Palestinian male, he was considered a combatant.

“AI has enabled the Israeli regime’s genocidal logic to be carried out with machine-driven efficiency—reducing Palestinians, their families, and their homes to what the military chillingly calls ‘garbage targets’.”

This machine-driven logic creates a “speed of targeting” paradox: the faster the system works, the less time remains to check its output. In Ukraine, Palantir’s software reportedly reduced the time from target detection to “prosecution” from six hours to just two or three minutes. In Gaza, the same speed has been used to automate the targeting of tens of thousands of people. At that scale, an error rate of even 10% means thousands of “mistaken” targets, and with them thousands of potential civilian deaths.
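
The arithmetic behind those two figures can be made explicit. The sketch below is illustrative only: the list sizes of 20,000 and 30,000 are hypothetical stand-ins for “tens of thousands” (no exact figure is given here), and the function names are invented for this example. It shows how a 10% error rate scales with the size of a target list, and how large the reported cut from six hours to two or three minutes is.

```python
def expected_misidentified(list_size: int, error_rate: float) -> int:
    """Expected number of wrongly flagged people for a list size and error rate."""
    if list_size < 0 or not 0.0 <= error_rate <= 1.0:
        raise ValueError("list_size must be >= 0 and error_rate within [0, 1]")
    return round(list_size * error_rate)


def speedup(before_minutes: float, after_minutes: float) -> float:
    """How many times shorter the detection-to-strike interval becomes."""
    if before_minutes <= 0 or after_minutes <= 0:
        raise ValueError("durations must be positive")
    return before_minutes / after_minutes


# Hypothetical list sizes standing in for "tens of thousands" (assumption).
for size in (20_000, 30_000):
    print(f"{size} flagged at 10% error: {expected_misidentified(size, 0.10)}")

# Reported reduction: six hours down to three minutes, or to two minutes.
print(f"speed-up: {speedup(6 * 60, 3):.0f}x to {speedup(6 * 60, 2):.0f}x")
```

Under these assumptions, a 10% error rate yields 2,000 to 3,000 misidentified people, and the reported reduction amounts to a 120- to 180-fold speed-up. The sketch counts misidentifications only; how many of those become deaths depends on factors it does not model.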

3. The Sovereignty Paradox: Why Switzerland Said “No” to Palantir

While some nations sprint toward automation, others are realising that digital “efficiency” often requires the surrender of national sovereignty. This is the “Sovereignty Paradox”: a nation-state cannot own its defence if it does not own the code its defence runs on.

In December 2024, the Swiss military completed a 20-page “precautionary risk assessment” of Palantir’s Asset Readiness module, a platform intended for military logistics. Its conclusion was a warning to the world: because Palantir is a US-headquartered firm, the data it holds falls within reach of the US CLOUD Act. This law allows US authorities to compel American tech companies to disclose data in their possession or control, regardless of where that data is physically stored. The Swiss concluded that US government access could not be technically prevented and that the use of such proprietary systems would lead to terminal “vendor lock-in.”

Contrast this with the United Kingdom, which has essentially “given the keys to the kingdom” to Palantir. The UK has awarded the firm more than £900 million in contracts, spanning the NHS, the Ministry of Defence, and even nuclear weapons management at AWE Nuclear Security Technologies.

The UK’s integration is characterised by a lack of transparency. On December 30, 2025, the MoD signed a £240.6 million Enterprise Agreement with Palantir—awarded without procurement competition under a defence exemption. This contract integrates Palantir into the “Digital Targeting Web,” the heart of the UK’s Strategic Defence Review.

This creates a “compliance double-bind.” Under Article 48 of the UK GDPR, companies cannot legally transfer data to a third country based on a foreign court order. Yet, under the US CLOUD Act, Palantir must comply with US warrants. By outsourcing its core data infrastructure to a foreign-incorporated “black box,” the UK has created a structural dependency that may prevent it from acting independently in a crisis.

4. Silicon Valley is Joining the Military Command

We are moving past the era of the defence contractor and into the era of the “corporate commander.” The relationship between Big Tech and the military is no longer a vendor-client transaction; it is, as Benjamin Netanyahu once described the relationship between Israel and Microsoft, “a marriage made in heaven.”

An unprecedented indicator of this marriage is the US Army’s decision to grant senior executives from companies like Palantir, Meta, OpenAI, and Thinking Machines Lab the rank of “lieutenant colonel.” These executives act as formal advisors, embedded within the military structure to “guide rapid and scalable tech solutions.”

This integration is reinforced by a “revolving door” of power. In the UK, the optics are equally startling. Former MoD Director of Policy Barnaby Kistruck joined Palantir in 2025, just days after leaving the ministry. Meanwhile, Lord Mandelson, whose firm Global Counsel has represented Palantir, accompanied the Prime Minister on a visit to Palantir’s Washington headquarters.

When corporate executives hold military rank and lobbyists sit in on high-level defence meetings, corporate logic becomes the architecture of warfare. The priorities of the boardroom—optimisation, scale, and the protection of proprietary “Ontology”—become the priorities of the battlefield. The military is no longer just using tech; it is being “re-architected” by it.

5. The “Black Box” Accountability Gap

The final takeaway is the total collapse of accountability. International Humanitarian Law (IHL) is built on the premise that a human is always responsible for a strike. However, the use of proprietary algorithms creates a “black box” that prevents any meaningful audit of lethal decisions.

The ICRC points to the Martens Clause, a legal safeguard stating that in cases not covered by treaties, populations remain protected by the “principles of humanity” and the “dictates of the public conscience.” There is a deep, instinctive discomfort with a machine making a life-and-death decision. Yet, when a “mistake” occurs—such as the precision-targeting of World Central Kitchen aid vehicles in Gaza—the complexity of the system provides a layer of plausible deniability.

The programmer built the model; the commander relied on the “AI-powered” analytics; the operator simply pushed the button the machine told them to push. In this loop, no one is responsible. This gap is widened by the candid, if chilling, admissions of tech leaders. Palantir CEO Alex Karp has openly admitted:

“Our product is used on occasion to kill people.”

Karp has further described the power of advanced algorithmic warfare as the “digital equivalent of a tactical nuclear weapon.” If these systems are indeed as powerful as nuclear weapons, they are the only weapons of that class in history to be managed by private corporations, governed by secret code, and deployed without a single international treaty to regulate them.

Conclusion: The Future of the “Seeing Stones”

The name “Palantir” refers to the “seeing stones” of Middle-earth—tools that allow the user to see across vast distances, but which are also easily corrupted by a dark, centralised power. It is a fitting metaphor for the current revolution.

Warfare has become the ultimate prototype for automated, unregulated force. We are witnessing a world where national sovereignty is traded for proprietary cloud contracts, and where individuals are algorithmically reduced to “garbage targets.”

The fundamental question for our era is whether a society can remain truly democratic if its most sensitive decisions—who is a friend, who is an enemy, and who is allowed to live—are managed by secret, proprietary algorithms that are beyond public audit or human appeal. As the “seeing stones” of Big Tech become the primary infrastructure of our survival, we must decide: are we using these tools to secure our future, or are we simply helping them hunt us down?

Avi is a researcher educated at the University of Cambridge, specialising in the intersection of AI Ethics and International Law. Recognised by the United Nations for his work on autonomous systems, he translates technical complexity into actionable global policy. His research provides a strategic bridge between machine learning architecture and international governance.