Palantir Gotham: Technical Dossier & Legal Analysis

Lead Paragraph: Palantir Gotham is a foundational data integration and AI decision-support platform designed to fuse massive, disparate intelligence streams into actionable targeting directives. Originally developed for the US Intelligence Community and now deployed extensively in conventional warzones like Ukraine, Gotham acts as the algorithmic connective tissue of modern warfare, accelerating the “kill chain” to unprecedented speeds. By gamifying lethal decision-making and transforming raw surveillance into automated targeting options, Gotham exemplifies the dangerous shift toward algorithmic complicity, where human commanders increasingly operate as mere rubber stamps for machine-generated violence.

⚙️ Technical Specifications & Capabilities

| Parameter | Specification |
| --- | --- |
| Manufacturer | Palantir Technologies |
| State Actor / Primary User | US Dept. of Defense, US Intelligence Community, Ukraine Armed Forces, NATO Allies |
| System Type | AI Decision Support System / Intelligence Data Fusion Platform |
| Data Inputs | SIGINT, OSINT, VISINT (Satellite imagery, full-motion drone video), HUMINT, financial and telecom records |
| Processing Capacity | Global-scale spatiotemporal data fusion; integrates 3rd-party AI/ML models in real-time |
| Output Mechanisms | Unified geospatial dashboards, predictive threat modeling, and automated target assignment |
| Operational Scale | Theater-wide multi-domain operations; utilized globally by military and law enforcement |

🧠 Algorithmic Architecture & Autonomy

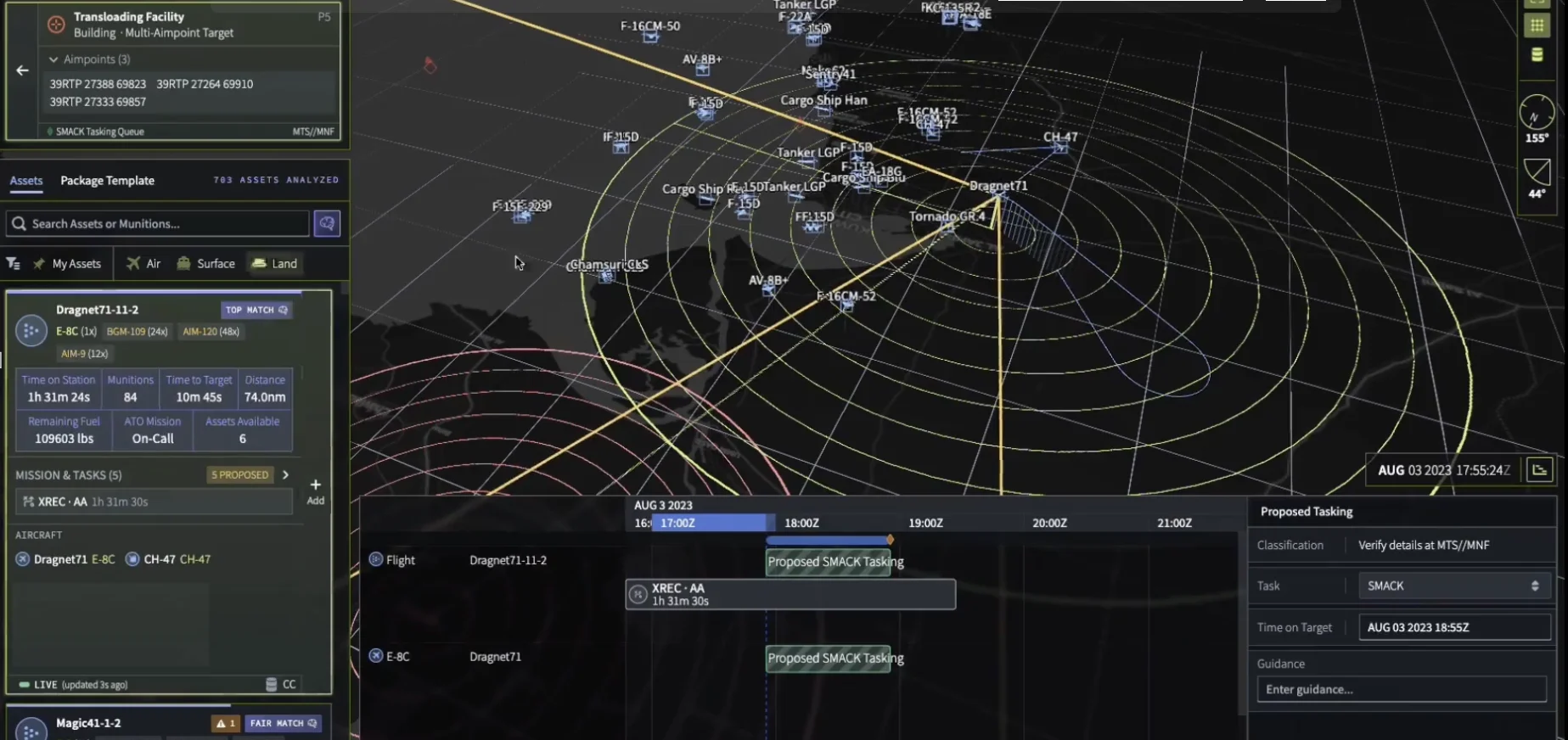

Gotham’s core architecture functions as an expansive, constantly updating digital twin of the battlefield. It ingests petabytes of unstructured data—ranging from drone surveillance footage and radar tracks to intercepted communications and civilian cell phone metadata—and pieces together seemingly unrelated nodes into a coherent relationship network. By leveraging advanced computer vision models (such as those developed under the US DoD’s Project Maven), Gotham’s AI modules can autonomously scan incoming feeds to identify, classify, and track enemy formations, vehicles, and high-value individuals without human prompting.
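The idea of piecing unrelated nodes into a relationship network can be illustrated with a generic record-linkage sketch. This is not Palantir's implementation, which is proprietary. The records, field names, and the `link_records` function are all hypothetical, and the technique shown is plain union-find clustering over shared attribute values.

```python
from collections import defaultdict

# Hypothetical records from three unrelated feeds; every value is invented.
RECORDS = [
    {"id": "telecom-1", "phone": "555-0101", "person": "A"},
    {"id": "finance-7", "phone": "555-0101", "account": "ACC-9"},
    {"id": "travel-3", "account": "ACC-9", "person": "B"},
    {"id": "telecom-2", "phone": "555-0199", "person": "C"},
]

# Attributes assumed to link two records when their values match.
LINK_KEYS = ("phone", "account")


def link_records(records, link_keys=LINK_KEYS):
    """Group records into clusters that share any linking attribute value."""
    parent = {r["id"]: r["id"] for r in records}

    def find(x):
        # Follow parent pointers to the cluster root, compressing the path.
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    def union(a, b):
        parent[find(a)] = find(b)

    first_seen = {}  # (key, value) -> id of the first record carrying it
    for r in records:
        for key in link_keys:
            if key in r:
                token = (key, r[key])
                if token in first_seen:
                    union(r["id"], first_seen[token])
                else:
                    first_seen[token] = r["id"]

    clusters = defaultdict(set)
    for r in records:
        clusters[find(r["id"])].add(r["id"])
    return sorted((sorted(c) for c in clusters.values()), key=lambda c: c[0])


if __name__ == "__main__":
    for cluster in link_records(RECORDS):
        print(cluster)
```

In this toy data, persons A and B land in the same cluster even though no single record mentions both: the link is purely transitive, through a shared phone number and a shared account. The same mechanism that surfaces hidden relationships can therefore also associate people who have no real connection.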

Once threats are algorithmically identified, Gotham accelerates the targeting process. The system cross-references the target’s coordinates with friendly unit locations, available munitions, and logistical data to automatically calculate firing solutions. It then assigns the target to the specific weapon system or unit that offers the optimal “cost-performance ratio.” In platforms like the US Army’s TITAN (Tactical Intelligence Targeting Access Node)—which heavily relies on Palantir software—the system delivers this “AI at the edge,” distributing automated targeting solutions directly to frontline operators.

In the terminal phase of the kill chain, Gotham does not possess the physical capability to launch a munition autonomously; it requires a human to authorize the strike. However, the system engineers a state of severe automation bias. The platform presents war as a clean, sanitized digital spectacle—a dashboard where a commander is fed pre-calculated firing solutions. While a human formally “pulls the trigger,” the cognitive labor of identifying, verifying, and prioritizing the target has been entirely surrendered to the algorithm. This dynamic fundamentally degrades Meaningful Human Control (MHC), reducing the human operator to a veto mechanism rather than a deliberate decision-maker.

🔗 Deployment History & OSINT Verification

Gotham has been deeply integrated into the US military and intelligence apparatus since the occupations of Iraq and Afghanistan, but its algorithmic warfare capabilities were battle-tested and refined during the Russo-Ukrainian War. In Ukraine, Palantir software merged commercial satellite imagery, classified intelligence, and crowdsourced data to provide near-real-time battlefield views, allowing Ukrainian forces to systematically target Russian assets with unprecedented speed. The US military has also expanded its reliance on Palantir through multimillion-dollar contracts for AI integration. Domestically, customized versions of Gotham have been used by US Immigration and Customs Enforcement (ICE) to track undocumented immigrants and by various police departments for predictive policing. These deployments have drawn severe criticism from civil liberties organizations.

⚖️ Legal Status & IHL Implications

- Article 36 Compliance: N/A (Software Loophole). Because Gotham is classified as an intelligence-fusion software platform rather than a standalone kinetic weapon, it largely evades the legal reviews of new weapons, means, and methods of warfare required by Article 36 of Additional Protocol I to the Geneva Conventions. This creates a massive accountability gap for systems that dictate lethal force.

- Principle of Distinction: While marketed as a tool of ultimate precision, Gotham’s ability to distinguish between combatants and civilians depends entirely on the quality of its data inputs. In asymmetric environments, integrating “noisy” data, such as behavioral patterns or proximity to suspects, can produce algorithmic false positives. If the data is flawed, the algorithm generates unlawful targets, leading to civilian casualties under the guise of mathematical certainty.

- Algorithmic Complicity / Human Rights: Gotham facilitates a profound moral detachment from the act of killing. By reducing the chaos of war to a predictable, gamified interface, it accelerates the kill chain beyond the limits of human ethical deliberation. Its dual-use deployment in predictive policing and immigration tracking further demonstrates its capability to operationalize mass surveillance and digital dehumanization at a systemic level, shielding systemic biases behind proprietary corporate algorithms.
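
The false-positive concern raised under the Principle of Distinction can be made concrete with base-rate arithmetic. The sketch below applies Bayes' theorem to a hypothetical classifier. The prevalence, sensitivity, and false-positive rate are illustrative assumptions, not measured figures for Gotham or any real system.

```python
def positive_predictive_value(prevalence, sensitivity, false_positive_rate):
    """Probability that a flagged individual is a true positive (Bayes' theorem)."""
    true_positives = prevalence * sensitivity
    false_positives = (1 - prevalence) * false_positive_rate
    return true_positives / (true_positives + false_positives)


if __name__ == "__main__":
    # Assumed values: 1 in 1,000 people observed is a legitimate target,
    # the classifier catches 99% of them and wrongly flags 1% of everyone else.
    ppv = positive_predictive_value(
        prevalence=0.001, sensitivity=0.99, false_positive_rate=0.01
    )
    print(f"Share of flagged individuals who are true positives: {ppv:.1%}")
```

Under these assumed numbers, only about 9% of flagged individuals are true positives, even though the classifier is nominally "99% accurate". When legitimate targets are rare in the observed population, false positives dominate the output.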

Closing Thought: The unchecked proliferation of data-fusion platforms like Palantir Gotham demands an immediate revision of international law to classify AI targeting backbones as weapon systems, subjecting them to strict regulatory oversight and ensuring human commanders cannot outsource their legal and moral accountability to an algorithm.

Avi is a researcher educated at the University of Cambridge, specialising in the intersection of AI Ethics and International Law. Recognised by the United Nations for his work on autonomous systems, he translates technical complexity into actionable global policy. His research provides a strategic bridge between machine learning architecture and international governance.